eBay Introduces the Listing Quality Report - Again

eBay has recently announced it is "introducing" the Listing Quality Report - presumably signaling that Harry Temkin and his team have brought this tool from an "early stages, work in progress" state to a completed, tested, ready-for-prime-time product.

This report was first introduced during a demo in the December 2020 Seller Check-In and started being released to sellers in January 2021. Originally there was no mention of it being a work in progress, but as I took it for an initial spin, it became clear very quickly there was still a lot of work that needed to be done.

There were multiple examples of data that was inaccurate or missing, numbers that didn't add up, and even little things like consistency in icon usage appeared not to have been checked before pushing the report to production.

I documented the issues and gave extensive feedback in the eBay community and on social media, resulting in unprecedented engagement from Harry Temkin and the product team directly involved in creating this report - previous posts here, here, and here.

Listing Quality Report 2.0

Now that eBay is ready to release the Listing Quality Report into the wild, I figured it's time for a second look.

By way of full disclosure - the screenshots below are from a different account than the previous posts. It's also important to note that this is only one example from one seller and other sellers may or may not see the same things in their own reports.

As a refresher, here's how eBay describes this report:

The Listing Quality Report gives you a performance overview of your biggest 10 categories (based on number of live listings) and provides recommendations and benchmark data to help you improve your listings.

The report offers a clear picture of your performance and suggests ways you can improve your listings to help increase visibility and sales.

How are the opportunities calculated?

We take your listings and compare them to listings by other eBay sellers in this category, looking at information from the past 31 days.

We check if there is a big gap between top and bottom benchmarks.

If your benchmark is lower than the top - we show you the best opportunities to improve your position.
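Read literally, those three steps boil down to a simple comparison. Here is a minimal sketch of how I interpret them. To be clear, eBay has not published the actual logic, so the function name, the `min_gap` threshold, and the sample numbers are all my own assumptions for illustration.

```python
def is_opportunity(seller_value: float, top_benchmark: float,
                   bottom_benchmark: float, min_gap: float = 10.0) -> bool:
    """Hypothetical reading of eBay's description, not eBay's real logic.

    All values are percentages for one metric in one category,
    measured over the same 31-day window.
    """
    # Step 2: is there a "big gap" between top and bottom benchmarks?
    # The 10-point default is an assumed threshold; eBay does not state one.
    gap = top_benchmark - bottom_benchmark
    if gap < min_gap:
        return False
    # Step 3: only flag the metric if the seller is below the top benchmark.
    return seller_value < top_benchmark
```

Under this reading, a metric where top and bottom performers look nearly identical would never be flagged, no matter where the seller sits.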

I'll start by saying the newest version of this report is a big improvement and many of the previous concerns seem to have been addressed.

One issue I see that may still be lingering is "column misalignment." When I had previously asked Harry Temkin why the data appeared to be incorrect in some sections, he said it was not a "formatting bug, but a mismatch of data."

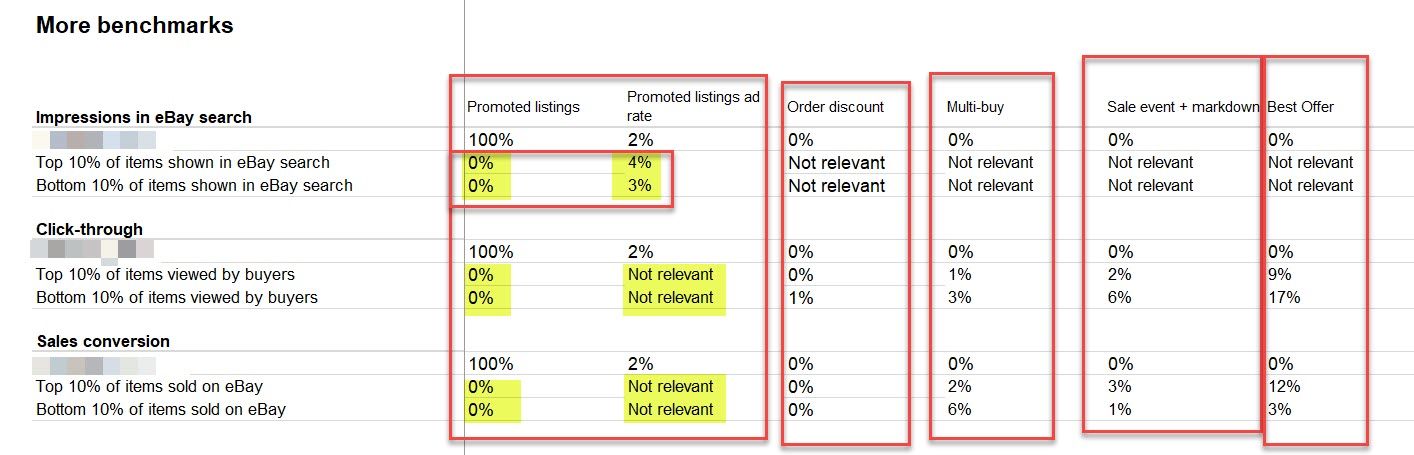

One example I had given of this kind of mismatch previously was that eBay showed 100% Promoted Listings usage with an average 0% ad rate, which is mathematically impossible since the minimum ad rate is 1%.

In a similar vein, it now appears that for both the top and bottom 10% of competitors, eBay is showing 0% of listings using Promoted Listings but then lists an average rate percentage (in this example 4% and 3%) which again would not be possible if 0% of their listings were promoted.

This screenshot represents one category, but every other category in the report for this account looks the same - all of them show competitors with 0% Promoted Listings usage, but then show a non-zero number for the average ad rate.
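For the data geeks, the two mismatches described above can be expressed as a simple sanity check. This is only a sketch of my own reasoning, not anything eBay provides: the class and function names are hypothetical, and I'm assuming the 4% and 3% figures belong to the top and bottom 10% rows respectively.

```python
from dataclasses import dataclass

MIN_AD_RATE_PCT = 1.0  # minimum Promoted Listings ad rate, as noted above


@dataclass
class PromotedBenchmark:
    label: str
    usage_pct: float        # share of listings using Promoted Listings
    avg_ad_rate_pct: float  # average ad rate shown in the report


def find_inconsistencies(row: PromotedBenchmark) -> list[str]:
    """Return the reasons a report row cannot be internally consistent."""
    problems = []
    # No promoted listings means there is nothing to average a rate over.
    if row.usage_pct == 0 and row.avg_ad_rate_pct > 0:
        problems.append("ad rate reported with 0% usage")
    # Any promoted listing carries at least the minimum rate.
    if row.usage_pct > 0 and row.avg_ad_rate_pct < MIN_AD_RATE_PCT:
        problems.append("ad rate below the 1% minimum")
    return problems


rows = [
    PromotedBenchmark("previous report", usage_pct=100, avg_ad_rate_pct=0),
    PromotedBenchmark("top 10%", usage_pct=0, avg_ad_rate_pct=4),
    PromotedBenchmark("bottom 10%", usage_pct=0, avg_ad_rate_pct=3),
]
for row in rows:
    print(row.label, find_inconsistencies(row))
```

All three rows fail the check, which is what I'd expect if the usage and ad rate columns are being populated from mismatched data.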

It's also interesting to note which kinds of promotions eBay is showing as "not relevant" - if this is accurate it would seem Promoted Listings only have an impact on Impressions, but not Click Through or Sales Conversion. All other discounts/promotions would appear to impact Click Through and Sales Conversion, but not Impressions.

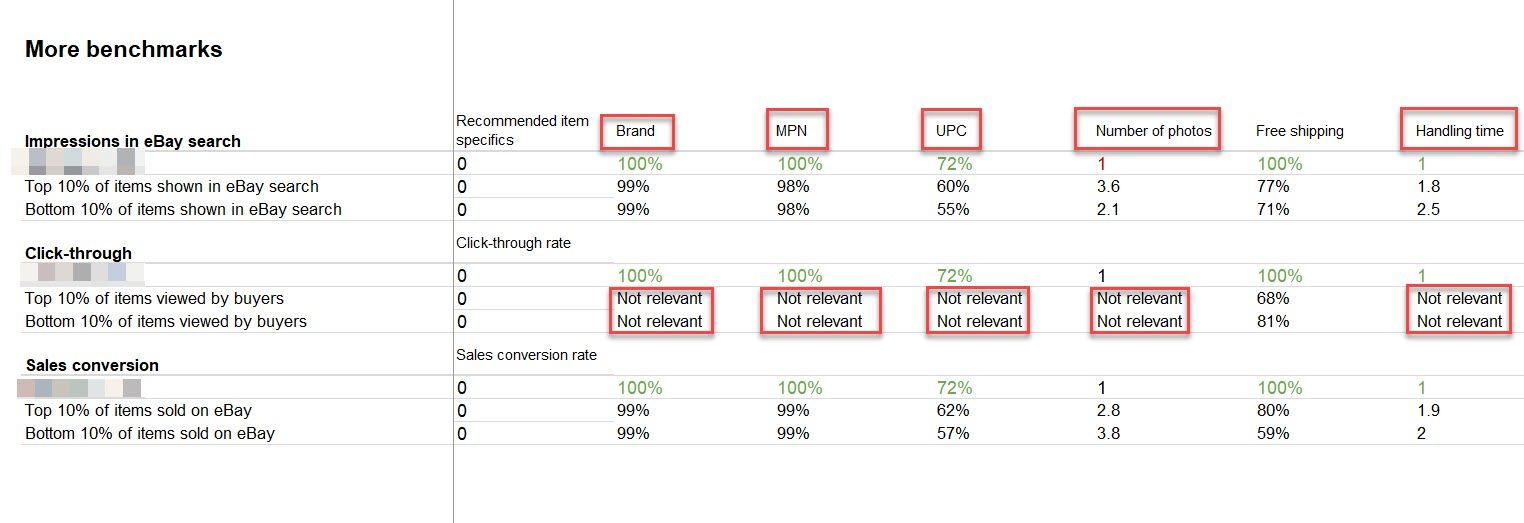

More interesting data as far as what eBay considers not relevant - some of these make sense, but as a fairly experienced online seller and buyer I have to admit that UPC has never been a factor in my personal sales conversion decisions nor have I ever seen it appear to have much impact on my customers.

As a bit of a data geek at heart, I think it would be really interesting to get a more in-depth look at exactly how eBay determines this "relevancy," but I suspect it is proprietary and not something Harry and the team would readily share.

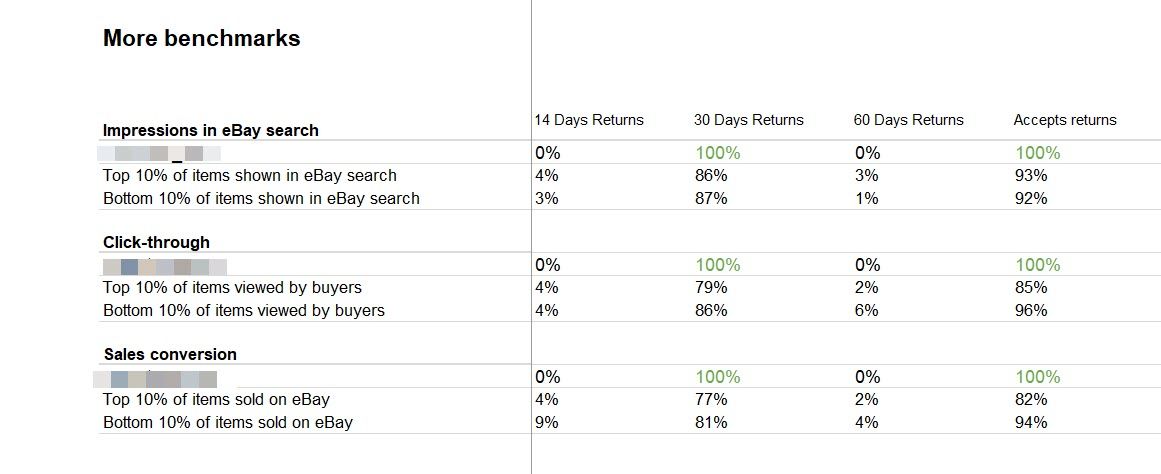

No real surprises in the data for returns - clearly 30-day returns are the established industry standard here and not much of a differentiator between top and bottom performers.

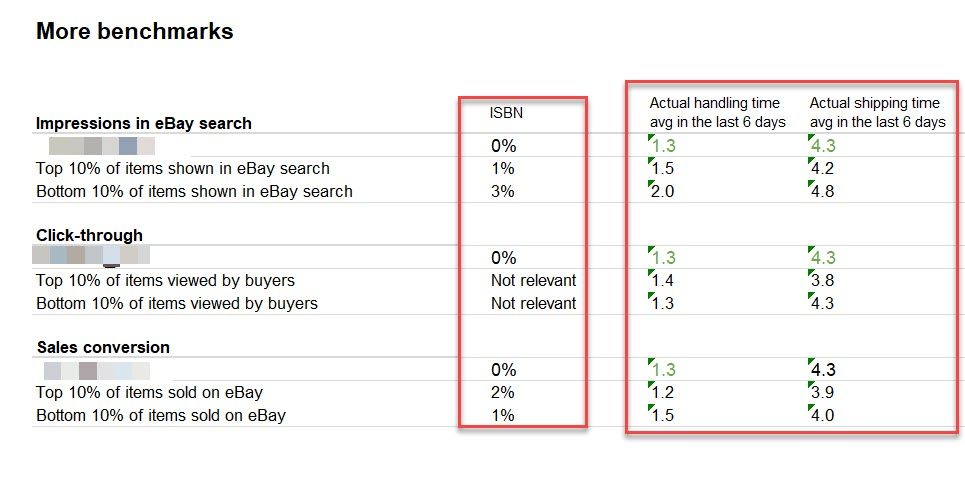

One new addition to this version of the report is the inclusion of actual average handling times and in-transit times.

While it's understandable eBay may favor sellers with faster average shipping times in best match search (resulting in more impressions), I have to wonder about the effect on click through and sales conversions.

Buyers have no visibility into sellers' actual handling times or average in-transit times. All they see is the expected delivery date shown by eBay, which eBay admits is derived from its own algorithms and "historical shipping times" for that seller, not from the handling time the seller actually sets on the listing or from real-time, up-to-date in-transit estimates from the carriers.

Given the fact that the handling and shipping time calculation isn't transparent, it's difficult to determine whether or not that really has a clear impact on click throughs and conversions.

Either way, since it's something that is not completely within the seller's control, it seems a bit disingenuous to present this data as a "benchmark" where there might be an opportunity for a seller to improve their position.

Several sellers have reported seeing some major technical issues from eBay's changes to shipping estimates and the new "Delivery in 4 Days" badging that is replacing the "Guaranteed Delivery" program. One seller even provided an example where eBay was showing the "Delivery in 4 Days" badge on items for which they had set a 10-business-day handling time.

In a scenario like that where the seller has done everything possible to accurately communicate the shipping time but eBay circumvents it, how is it fair for the seller to be held to that false promise? For the purposes of this report - can we trust the average handling and shipping times to reflect relevant, accurate data on which to base rankings and recommendations?

Final Thoughts

Overall, the Listing Quality Report 2.0 seems to be an improvement as far as data provided, but I'm still left wondering exactly how a seller would find most of this information truly useful.

Many of the recommendations and opportunities boil down to things most sellers are already well aware that eBay recommends - Free/Fast Shipping, Free Returns, Increased Promoted Listings Rates, Discounts/Best Offers, Multiple Pictures, and Item Specifics.

In my experience as a seller, and from talking to other experienced sellers, my take is that many sellers are already doing these things and the ones who are not have chosen not to do so as a calculated business decision, not because they are unaware of what eBay advises.

If a seller has already determined any of those recommendations are not a good fit for their business model or are cost prohibitive, I don't see anything in this report that is likely to sway them.

I'll also admit to being a little disappointed that variation listings and auctions are still not included in this report. Harry Temkin specifically said support for variations was "on the road map" for improvements planned for January - March.

For the "finished product" release in May not to include them makes me wonder if they ran into unexpected issues implementing that road map and if so, did they just decide to push this project out the door as a "minimum viable product" and hope it would be good enough?